How is Tamr Different from Traditional MDM and ETL Tools?

Editor’s Note: This post was originally published in June 2019. We’ve updated the content to reflect the latest information and best practices so you can stay up to date with the most relevant insights on the topic.

The data management industry is in a constant state of change, which can cause confusion. Companies collect enormous amounts of data at a rapidly expanding rate, but the old processes in place for organizing and mastering this data make it difficult to make sense of it all.

But as we know, unmastered data leads to a host of problems—from the inability to optimize business operations effectively and deliver quality customer experiences to increased exposure to data breaches and compliance risks.

In this blog post, we compare the key features of traditional, rules-based master data management (MDM) solutions with extract, transform, and load (ETL) and Reverse ETL solutions. We’ll discuss the benefits of Tamr’s AI-native approach to data mastering when compared with ETL, Reverse ETL, and traditional MDM.

Traditional MDM vs. ETL vs. Reverse ETL: What’s the Difference?

Historically, data mastering options included traditional MDM and ETL solutions. Let’s review the pros and cons of each.

Master Data Management (MDM): The traditional MDM process uses rules to create a single, master record that defines each core entity used across the organization. For example, you might find rules such as:

- “Bob” matches “Robert” in the name field

- -99 matches null in the salary field

- If systems A and B have different values for address, then use the one from system A
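To make the three example rules concrete, here is a minimal Python sketch of how they might be hand-coded. It is an illustration only, not how any particular MDM product implements rules: the nickname table, the sentinel set, and all function names are assumptions made for this example.

```python
from typing import Dict, Optional

# Hypothetical nickname table; only "Bob" -> "Robert" comes from the text above.
NICKNAMES = {"bob": "robert", "rob": "robert", "bill": "william"}

# Sentinel values treated as "no value" in the salary field.
SALARY_NULL_SENTINELS = {-99}

# Source systems in priority order for the address field (system A wins over B).
ADDRESS_PRIORITY = ["A", "B"]


def canonical_name(name: str) -> str:
    """Lower-case the name and map known nicknames to a canonical form."""
    key = name.strip().lower()
    return NICKNAMES.get(key, key)


def names_match(left: str, right: str) -> bool:
    """Rule 1: "Bob" matches "Robert" in the name field."""
    return canonical_name(left) == canonical_name(right)


def normalize_salary(salary: Optional[int]) -> Optional[int]:
    """Rule 2: -99 is treated the same as null in the salary field."""
    return None if salary in SALARY_NULL_SENTINELS else salary


def pick_address(addresses_by_system: Dict[str, str]) -> Optional[str]:
    """Rule 3: when systems disagree, take the value from the highest-priority system."""
    for system in ADDRESS_PRIORITY:
        value = addresses_by_system.get(system)
        if value:
            return value
    return None
```

Each new nickname, sentinel value, or source system means another entry that someone has to add and maintain by hand.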

Pros and cons of traditional, rules-based MDM solutions include:

- Traditional MDM Pros: The process aims to provide a “golden record,” which is meant to be the “source of truth” about an entity that other sources can reference downstream. These systems also provide insight into the lineage of these records and flexibility in defining how these records are created. In theory, when the process runs without errors, it should eliminate duplicates and unmatched data and give consumption endpoints an accurate view of each entity.

- Traditional MDM Cons: Maintaining rules is tedious and time-consuming, and it requires significant human effort, making traditional MDM impossible to scale. Not only will this lack of scalability leave a large portion of your data unmastered, but you will also pay a premium in resource costs and still remain susceptible to human error.

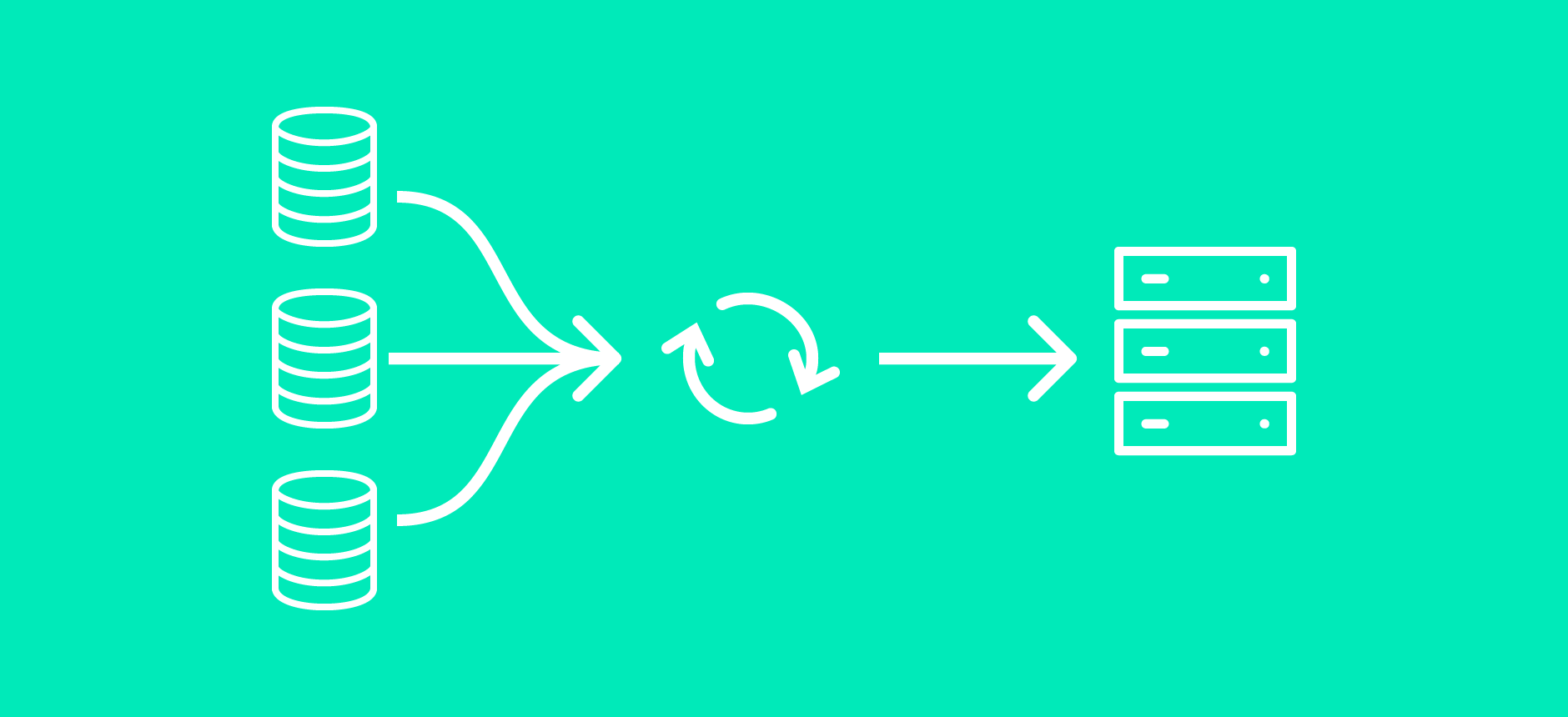

Extract, Transform, and Load (ETL): The ETL method is a widely adopted data mastering process that’s been around for 20+ years. It involves creating a global schema up front, often requiring technical expertise to understand how the data will be used across systems. From there, experts will develop conversion, cleansing, and transformation processes to standardize incoming data. They will also need to continuously maintain and update the schema to ensure it remains accurate and aligned with evolving business needs.

Pros and cons of ETL include:

- ETL Pros: When paired with an MDM solution, ETL is an effective way to transform unstructured data into structured formats as the data moves through the pipeline. It can also master data using simple rules such as “Inc.” = “Incorporated.”

- ETL Cons: ETL on its own is not enough to master data and resolve entities. Its labor-intensive approach also makes it impossible to scale, so ETL is not a viable option for companies that collect a large (and continuously growing) amount of data. ETL is also not designed to deliver a golden record, a key output of the mastering process that is necessary to drive consistency across consumption endpoints.
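As a rough illustration of the kind of simple rule an ETL transform step can apply, the sketch below standardizes company suffixes in Python. Only the “Inc.” rule comes from the text above; the other suffixes, the `company` field, and the function names are assumptions for this example.

```python
from typing import Dict, List

# Suffix rules keyed by the lower-cased suffix without its trailing period.
# Only "inc" comes from the text; the others are illustrative.
SUFFIX_RULES = {"inc": "Incorporated", "corp": "Corporation", "ltd": "Limited"}


def standardize_company(name: str) -> str:
    """Collapse whitespace, drop commas, and expand a known trailing suffix."""
    tokens = name.replace(",", " ").split()
    if not tokens:
        return ""
    suffix = tokens[-1].rstrip(".").lower()
    if suffix in SUFFIX_RULES:
        tokens[-1] = SUFFIX_RULES[suffix]
    return " ".join(tokens)


def transform(rows: List[Dict]) -> List[Dict]:
    """Transform step: return new rows with the company field standardized."""
    return [{**row, "company": standardize_company(row["company"])} for row in rows]
```

Under this rule, `"Acme, Inc."` and `"Acme Inc"` both become `"Acme Incorporated"`, but `"ACME Inc."` becomes `"ACME Incorporated"`. The two results still differ in case, so the rule alone does not resolve them to one entity without yet another rule.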

Reverse ETL: The inverse of ETL, Reverse ETL makes data available to business users by extracting data from a centralized source system, transforming it, and then loading it into their operational systems for use in day-to-day operations.

Pros and cons of Reverse ETL include:

- Reverse ETL Pros: Reverse ETL’s primary focus is to make data immediately available for use by business teams within their operational systems, without the need for technical expertise.

- Reverse ETL Cons: Reverse ETL applies simple, direct transformations to ensure data is in the appropriate format for operational systems. This process stands in contrast to ETL’s more complex transformations that structure data before loading it into a data warehouse or data lake for use in analytics. Reverse ETL takes the data as-is, and if it’s unmastered data, it just accelerates the proliferation of untrusted data across the enterprise.
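The extract, transform, and load steps of Reverse ETL can be sketched in a few lines of Python. This is a toy example under stated assumptions: an in-memory SQLite database stands in for the centralized warehouse, a plain dictionary stands in for an operational system such as a CRM, and the table, column, and payload field names are all hypothetical.

```python
import sqlite3
from typing import Dict, List, Tuple


def extract(conn: sqlite3.Connection) -> List[Tuple]:
    """Extract: read customer rows from the central store."""
    cursor = conn.execute(
        "SELECT customer_id, full_name, lifetime_value "
        "FROM customers ORDER BY customer_id"
    )
    return cursor.fetchall()


def to_crm_payload(row: Tuple) -> Dict:
    """Transform: a simple, direct reshaping into the target system's format."""
    customer_id, full_name, lifetime_value = row
    return {
        "external_id": str(customer_id),
        "name": full_name,
        "ltv_usd": round(lifetime_value, 2),
    }


def load(payloads: List[Dict], crm: Dict[str, Dict]) -> None:
    """Load: upsert each payload into the (stand-in) operational system."""
    for payload in payloads:
        crm[payload["external_id"]] = payload


def demo() -> Dict[str, Dict]:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE customers "
        "(customer_id INTEGER, full_name TEXT, lifetime_value REAL)"
    )
    # Two hypothetical rows that may describe the same person.
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?, ?)",
        [(1, "Robert Smith", 1200.5), (2, "Bob Smith", 300.0)],
    )
    crm: Dict[str, Dict] = {}
    load([to_crm_payload(row) for row in extract(conn)], crm)
    conn.close()
    return crm
```

Nothing in this pipeline checks whether “Robert Smith” and “Bob Smith” are the same customer. Both rows are loaded as separate records, which is how unmastered data spreads downstream.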

For decades, traditional MDM, ETL, and Reverse ETL were the most widely used solutions for data mastering processes. But their manual, labor-intensive nature and inability to scale render them virtually useless when it comes to mastering enterprise data today.

An AI-Native Solution to Data Mastering

Using an AI-native master data management solution like Tamr, built with AI at the core, is the only viable solution to data mastering at scale. Through its powerful combination of machine learning, agentic AI, and human feedback, AI-native MDM cleans, unifies, and enriches your data, delivering the golden records needed to support analytical and operational use cases as well as AI initiatives. It overcomes the shortcomings of traditional MDM, ETL, and Reverse ETL solutions, allowing companies to master their key business entities in an efficient and effective manner.

Further, AI-native MDM platforms like Tamr employ the right AI models to continually evolve and update as your data changes and grows, allowing you to tackle complex data mastering and data curation challenges.

Bridging the Gap Between Data, Analytics, Operations, and AI

Tamr’s API-first approach to integration enables it to easily connect to internal and external datasets including CRM and ERP systems, external reference data aggregators, and third-party enrichment providers. Using proprietary, patented machine learning technology and agentic AI, Tamr produces high-quality, up-to-date, curated golden records that are ready for consumption in downstream analytics, operational systems, and AI applications.

In addition, Tamr incorporates AI agents into the data mastering process, enabling them to play an active role in curation by analyzing and providing insights about unresolved data, organizing items in queues, and identifying and escalating exceptions for human review. Using this new, promising approach to data mastering and data curation, Tamr enables organizations to improve data quality at scale while shortening the last mile of curation before data is put to use.
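To give a feel for what organizing items into queues and escalating exceptions for human review can look like, here is a generic Python sketch. It is not Tamr’s implementation or API. The string similarity from the standard library’s `difflib` is a simple stand-in for a trained model’s match score, and the thresholds and queue names are assumptions chosen for illustration.

```python
from difflib import SequenceMatcher
from typing import Dict, List, Tuple

# Hypothetical thresholds; a real system would tune or learn these.
AUTO_MATCH = 0.9
AUTO_NON_MATCH = 0.5


def similarity(left: str, right: str) -> float:
    """Stand-in match score: case-insensitive string similarity in [0, 1]."""
    return SequenceMatcher(None, left.lower(), right.lower()).ratio()


def triage(score: float) -> str:
    """Route a candidate pair by score; uncertain pairs go to human review."""
    if score >= AUTO_MATCH:
        return "auto_match"
    if score < AUTO_NON_MATCH:
        return "auto_non_match"
    return "review"


def build_queues(pairs: List[Tuple[str, str]]) -> Dict[str, List[Tuple[str, str]]]:
    """Sort candidate record pairs into one queue per triage outcome."""
    queues: Dict[str, List[Tuple[str, str]]] = {
        "auto_match": [],
        "review": [],
        "auto_non_match": [],
    }
    for left, right in pairs:
        queues[triage(similarity(left, right))].append((left, right))
    return queues
```

The design idea is that only pairs in the `review` queue need a person’s attention, and in a learning system their decisions could be fed back to improve future scores.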

Get a free, no-obligation 30-minute demo of Tamr.

Discover how our AI-native MDM solution can help you master your data with ease!