How To Leverage Ontologies with your Mastered Data

Ontologies are used across industries in order to share information in a standardized manner. At its core, an ontology is a shared, explicit and formal domain model for representing entities in one’s data and organization, and it serves as a common vocabulary for human consumption. By mapping respective entities to the shared ontology, distinct groups are able to share knowledge and data more efficiently, and automated systems can comprehend the data without further modifications.

While it is widely accepted that ontologies are useful, many organizations struggle to maximize their potential. For this reason, we have developed an ontology-enabled analytic data model for bridging the gap between unified, mastered data and industry-specific ontologies. Using this model, Tamr users can manage their ontologies directly within the Tamr platform and export analytics-ready datasets to their analytics or BI tool of choice.

The ontology-enabled data model is agnostic to the data type, ontology and BI tool for consumption. To showcase the workflow of this analytic data model, we will use financial service industry data and the Financial Industry Business Ontology (FIBO). The ultimate goal is to define Tamr-curated entities and the relationships between them in FIBO and to enable consumption of the standardized data in a graph database. From the graph database, the user can visualize their entities in a knowledge graph, a representation of data as nodes and edges, and traverse the graph using their ontology.

Conceptual model of data “hooked” to FIBO. Tamr-curated entities (Johnathan Smith, ABC Corporation, XYZ Incorporated, and 9 Main Street) are hooked to their entity types in the ontology graph, and relationships between them are named per FIBO.

Getting started with the Financial Industry Business Ontology (FIBO)

The Financial Industry Business Ontology is an RDF graph model of standard financial entities and relationships between them. To traverse FIBO and make it usable within Tamr, we first ingest the ontology into Neo4j, a graph database. From there, we extract two things from the ontology: an entity class taxonomy for classifying entities from our data and a direct relationship map (see Appendix).

The scripts to extract the taxonomy and relationship map from the Financial Industry Business Ontology are available on GitHub.

Tamr ontology workflow.

Tamr Workflow

In the Tamr platform, we begin by mastering all entities that exist in our source datasets. In this example, we use Anti-Money Laundering / Know Your Customer datasets (Panama Papers, GLEIF), which includes companies, addresses and people. We then build golden records on each mastering project. These golden records contain the most trustworthy and complete entity information and will become the nodes in our knowledge graph.

Next, we classify these golden records into the FIBO Taxonomy that we extracted from the ontology. Subject matter experts classify a few golden records into the taxonomy and Tamr’s machine learning algorithm leverages that user feedback to classify the rest of the golden records.Finally, everything comes together in a schema mapping project that leverages the Tamr transformations feature. We start with the source datasets, which contain the relationships between entities, and replace the source and subject of each relationship with the golden records of our mastered entities. We then add their FIBO taxonomy classification path, as well as the corresponding relationship from the FIBO relationship map. The relationships will constitute the edges of the knowledge graph. The result is an analytics-ready dataset, ready to be consumed by a graph database.

Visualizing the Data on a Graph

We stream the unified dataset directly from Tamr into Neo4j using the plugin APOC, “Awesome Procedures On Cypher”, which includes an external API connector. With a few simple cypher queries, the data can be loaded into Neo4j and hooked onto the Financial Industry Business Ontology.

First, we explore the impact of Tamr’s entity mastering on the graph view of the data.

Query for company “DABJAM” and all its connections before Tamr mastering:

Before Tamr Mastering, you see 25 DABJAM entities with connected addresses, but we cannot determine entity type or relationship type, nor that all of these company nodes are actually the same company.

Query for company “DABJAM” and all its connections after Tamr mastering:

After Tamr Mastering, all DABJAM nodes have been reconciled into one curated node, with curated entities (addresses and people) connected to it with well-defined relationships.

Then we can explore the knowledge graph through the lens of ontology.

After Tamr, we can also see these entities hooked onto the ontology per their entity type, and with corresponding relationships named based on ontology vocabulary.

Once the data is hooked to the ontology, we can use its hierarchical structure to perform counts of all entities within subclasses of a higher-level entity type, or view connections between entity types within subclasses of a high-tier field.

Tamr’s impact on graph database solutions is extreme – mastering and deduplicating data is essential to building meaningful knowledge graphs and seeing the full extent of the connectedness of the entities in your enterprise. Incorporating your ontologies into Tamr projects allows the unified data to be consumed by every other tool used across the enterprise, and across different enterprises in an industry.

Appendix

Creating the FIBO Taxonomy

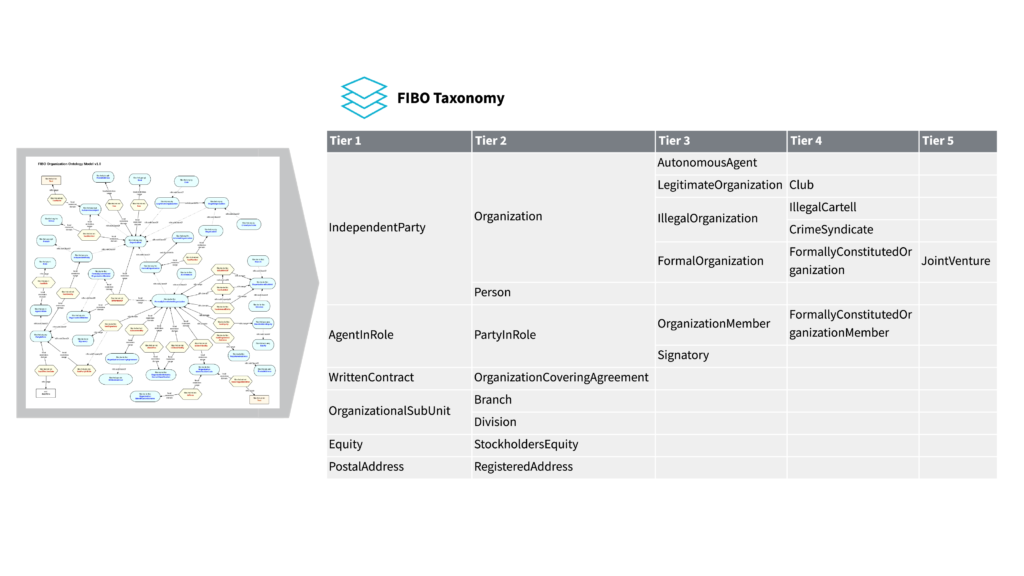

Example of the extraction of FIBO Taxonomy creation from a subset of the ontology graph.

From FIBO, we extract classes (entity types) into a hierarchical taxonomy by first iterating through the ontology to determine top tier entities by finding all class nodes which are not a subclass ([:SCO] relationship type in the Neo4j FIBO representation) of any other class node. These become the tier 1 classifications of the taxonomy we are building. From each of these tier 1 nodes, we then iterate down the hierarchy of subclasses, finding all entities that are a subclass of that top tier node as tier 2, subclasses of those as tier 3, and so on.

FIBO extends out to 12 tiers, leaving us with an extremely large taxonomy., so for the purposes of implementation, We therefore often parse down this taxonomy into relevant class types by only retaining the tier 1 classifications and corresponding subtrees for the determining which entities might exist in our dataset and retaining only the tier 1 classifications and their subtrees that are relevant. For contextual purposes, we always maintain every subclass hierarchy of a maintained tier 1 classification.

Extracting the FIBO Relationship Map

FIBO allows us to define direct relationships between entity types based on the class of the source and subject (start and end) of the relationship. We iterate through the graph ontology once again, extracting each relationship node from the RDF, as well as its source and subject class types and the direction of the relationship (unidirectional or bidirectional). This will enable us to draw the graph of our data as it would be represented by the ontology. From this, we will be able to use the source and subject as a key to determine the name and direction of a given relationship.

Example of the extraction of FIBO Relationship Map extraction from a subset of the ontology graph.

Interested in learning more about ontologies and why they are the role they play in unifying different data sets? Read my next post, A Data Scientist’s Rosetta Stone: Unifying Disparate Data with Ontologies.

Get a free, no-obligation 30-minute demo of Tamr.

Discover how our AI-native MDM solution can help you master your data with ease!