Why Your Manufacturing Digital Transformation Initiative is Stalled

So what’s the problem?

Every manufacturer I have engaged with in the past 10+ years claims to be data-driven, and almost all of them are investing in “Digital Transformation and Industry 4.0” initiatives. These initiatives have business-critical goals like better asset utilization, inventory reduction, improved quality and throughput, or an optimized supply chain. The required Key Performance Indicators (KPIs) like OEE, MTTF, OTD and FPY are all well understood by Kaizen teams committed to continuous improvement. Given that much of manufacturing is digitized, and with the adoption of IoT on top of “smarter” machines, the stage appears to be set for manufacturing analytics to provide the critical insights every business seeks.

The unfortunate reality is that almost all of these analytics-driven initiatives stay in the crawl stage for too long and fail to deliver on their promise in a reasonable period of time. Why?

While there is no silver bullet, one big issue is that manufacturers overestimate data quality and underestimate the variety of data in their application data silos. They allocate time and effort to unifying and contextualizing data, which is a foundational requirement, but then discover that the data is far more fragmented and inconsistent than expected, and that making sense of it relies heavily on “difficult to schedule” subject matter experts from the business. Often, it is only several months into a project that companies realize they are a long way from operationalizing digital transformation and driving analytics.

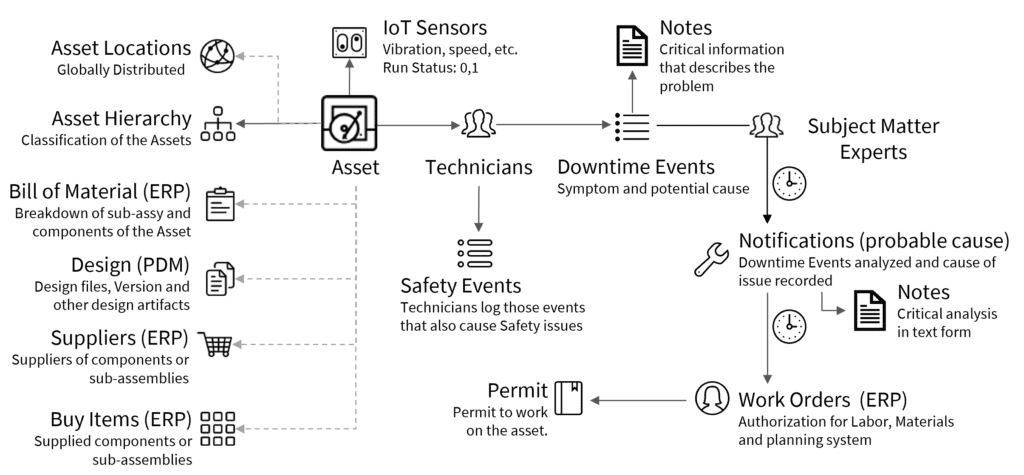

This should not be a surprise if you consider the mind-numbing number of business-critical applications that a typical manufacturer has to deal with to run their business. Each application within the lifecycle domain of a business (the individual blocks in the illustration) may be optimized, but a digital transformation project has to stitch together data streams across domains in order to deliver business value.

How do manufacturers solve the problem?

In my experience, most Digital Transformation projects are saddled with data unification and integration approaches that are dated, don’t scale, and are highly resource intensive. Many manufacturers are sold on “application integrations” by application vendors or system integrators, which may be the answer for some applications but do not solve every problem. Others aggregate data into lakes and then employ a small army of data engineers to write thousands of business rules to compensate for data quality and variety. In a static world, this may still cut it, but it has been shown that 85% of projects with deterministic rules (traditional MDM) eventually fail to meet their stated goals. So, if yesterday’s solutions don’t really work, what does?

Tamr recognized that the problems created by data variety are precisely what machine learning can solve in an agile way: data mastering at scale. We have proven over the past few years that our probabilistic, model-based approach, which is expert-guided, scales quickly, is far less resource intensive, and delivers value quickly by feeding your analytics or downstream applications with structured, unified data.

Bolted-on application integrations are still needed. Traditional MDM projects with deterministic rules still have a place in your tool kit. But you need to look to machine learning to accelerate your projects or to take on cross-domain problems that carry very compelling business value.

So, what does this look like in real life?

Let’s take the example of one of our customers in the oil and gas industry, which is investing in a key initiative to improve the reliability of its offshore drilling equipment. Improving reliability by 0.1 to 1% directly impacts bottom-line productivity to the tune of “several hundred million dollars,” which makes this a priority for them. In addition, more reliable equipment was estimated to save a further $90M in maintenance spend if they could become more preventive/predictive in their planning. Why is this so important to them today?

The urgency to understand root causes and get more predictive arises because 50% of global production comes from assets beyond the midpoint of their life cycle, which implies an increased risk of equipment (asset) failures. But here is the silver lining: operations, supply chain and back office are digitized and sitting on huge piles of data (10+ years’ worth) that are begging to be analyzed to understand common causes of failures across equipment and locations. Their project goals are straightforward and the attack plan is quite intuitive.

- Identify “bad actor” equipment through downtime events

- Cluster similar events on each Asset (equipment) and match them to probable root cause(s) from the Notifications that were generated downstream (see illustration below)

- In aggregate, these “high-value” clusters potentially point to faulty components and/or processes, which can then be analyzed systematically to drive improvements in reliability and utilization

- When additional metrics like Mean Time to Failure (MTTF), Mean Time Between Failures (MTBF) and Overall Equipment Effectiveness (OEE) can be tracked reliably for this “bad actor” equipment and its components, they can help drive a more optimal maintenance schedule

- In addition, they also want to look at operator safety notices related to the equipment failures to drive a safer work environment
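To make the metrics in the plan above concrete, here is a minimal sketch of how MTBF, MTTR, availability and OEE could be derived from downtime events for a single asset. It uses the common textbook definitions (MTBF as uptime per failure, MTTR as downtime per failure, OEE as availability × performance × quality). The `DowntimeEvent` type, the asset ID and all numbers are hypothetical and do not come from the customer project.

```python
from dataclasses import dataclass


@dataclass
class DowntimeEvent:
    asset_id: str      # hypothetical asset identifier
    hours_down: float  # duration of the outage in hours


def reliability_kpis(events, observed_hours):
    """Return MTBF, MTTR and availability for one asset over an observation window."""
    failures = len(events)
    if failures == 0:
        # No failures observed: MTBF and MTTR are undefined for this window.
        return {"mtbf": None, "mttr": None, "availability": 1.0}
    downtime = sum(e.hours_down for e in events)
    uptime = observed_hours - downtime
    return {
        "mtbf": uptime / failures,    # mean time between failures
        "mttr": downtime / failures,  # mean time to repair
        "availability": uptime / observed_hours,
    }


def oee(availability, performance, quality):
    """Overall Equipment Effectiveness as the product of its three factors."""
    return availability * performance * quality


# Invented example: two outages in a 1,000-hour window.
events = [DowntimeEvent("pump-7", 10.0), DowntimeEvent("pump-7", 30.0)]
kpis = reliability_kpis(events, observed_hours=1000.0)
print(kpis)
# Performance and quality factors are assumed values for illustration.
print(oee(kpis["availability"], performance=0.9, quality=0.95))
```

These numbers are only as trustworthy as the link between each downtime event and the right asset, which is why the clustering steps come first in the plan.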

When they engaged Tamr to tackle this problem, they had already spent more than a year trying to clean up and harmonize the data to drive analytics, but the going was slow. If this sounds familiar, you may recognize the reasons as well: data silos, inconsistent data entry, data from multiple locations in different formats, IoT data that did not line up with downstream operational data, and high-value root-cause information trapped inside technical notes. Their data map to solve what appeared to be a straightforward issue looked something like this.

Each of the entities and related data shown in the illustration above was practically in its own silo, which made it challenging to feed their data analysts with clean data. Their approach, not that different from most, was to look for patterns using custom rules in order to cluster downtime events by asset. Any output of this exercise required validation by subject matter experts (senior technicians), who had to scan rows of data in an Excel spreadsheet and provide feedback. This was deemed unscalable given that it took a senior technician an hour to review 20 records for just a single site, not to mention that it was a low-value activity with diminishing returns for such scarce experts. Furthermore, progress was slowed by the start-stop iterations between IT/data scientists and subject matter experts.

How can Tamr help?

We took on the challenge of proving out the Tamr platform on a sample set of their data from one location. Our goal was to show how our probabilistic, model-based approach could identify clear patterns (high-value clusters) in their data with minimal (but mandatory) input from a couple of their subject matter experts. The effort included some data prep, primarily to exclude data that was not required and to clean up some known inconsistencies. But what amazed our customer was that in a couple of weeks we proved the following:

- No “business” rules had to be coded. All that was needed was a clear understanding of the data silos and their relationships to set up Tamr’s machine learning model

- Only a couple of iterations of the model, with subject matter expert input, were needed to show value. Their experts simply had to answer Yes/No to “Pair Matching” questions on a simple, intuitive user interface, no spreadsheets required

- Tamr mapped ingested dataset schemas to a unified schema and suggested mappings showing how new datasets from other sites or any other data silo can be added quickly to the pipeline

- Tamr’s machine learning model generated high-value clusters from the unified sample data, which included extracting signals from technicians’ notes in the dataset. A light bulb went off in our customer’s head: no number of rules or amount of human effort could incorporate this at scale
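As a rough illustration of the workflow described in the list above, the toy sketch below uses a handful of expert Yes/No answers on record pairs to tune a similarity cut-off, then groups records into clusters. It is emphatically not Tamr’s model: it swaps in plain token-overlap (Jaccard) similarity and union-find clustering, and the record IDs, notes and labels are all invented.

```python
from itertools import combinations

# Hypothetical downtime records keyed by event ID; the notes are invented.
records = {
    "E1": "seal leak on mud pump",
    "E2": "mud pump seal leak found",
    "E3": "top drive motor overheated",
    "E4": "overheated motor on top drive",
    "E5": "crane hydraulic hose burst",
}

# Expert Yes/No answers to pair-matching questions (True = same failure).
expert_labels = {
    ("E1", "E2"): True,
    ("E3", "E4"): True,
    ("E1", "E3"): False,
    ("E2", "E5"): False,
}


def jaccard(a, b):
    """Token-overlap similarity between two free-text notes."""
    ta, tb = set(a.split()), set(b.split())
    return len(ta & tb) / len(ta | tb)


def pick_threshold(labels):
    """Choose the similarity cut-off that agrees with the most expert answers."""
    scores = {pair: jaccard(records[pair[0]], records[pair[1]]) for pair in labels}

    def agreement(t):
        return sum((scores[pair] >= t) == answer for pair, answer in labels.items())

    return max(sorted(set(scores.values())), key=agreement)


def cluster(threshold):
    """Group records whose similarity meets the threshold (union-find)."""
    parent = {r: r for r in records}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path compression
            x = parent[x]
        return x

    for a, b in combinations(records, 2):
        if jaccard(records[a], records[b]) >= threshold:
            parent[find(a)] = find(b)

    groups = {}
    for r in records:
        groups.setdefault(find(r), []).append(r)
    return sorted(groups.values())


threshold = pick_threshold(expert_labels)
clusters = cluster(threshold)
print(clusters)
```

The point of the sketch is the division of labor: experts only confirm or reject suggested pairs, and the cut-off is learned from their answers rather than hand-coded as a rule.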

What was the result?

The end result of our proof-of-concept project was that Tamr clustered more than one-third of the failure events in the sample data with 87% accuracy, as validated by their subject matter experts. We were able to clearly show that Tamr can quickly generate clean data that is ready for expert analysis to determine common root causes of failures of their assets.

Even as I write this, Tamr has onboarded data from 2 more sites and generated high-value clusters within a week by reusing the model from the first project. That, in a nutshell, proved the agility and scalability of Tamr to our customer. It gave them the confidence that Tamr can power their analytics to answer the sequence of questions that are critical to generating transformational business value.

- Improve equipment reliability (thus utilization)

  - Which equipment fails the most?

  - Which components fail the most in equipment?

  - Which suppliers do we buy the above components from?

  - Which designs fail more?

- Improve maintenance planning (towards a path to get more predictive)

  - Which equipment needs preventive maintenance and which doesn’t?

  - What is the average time to fix a type of equipment (MTTR)?

  - How many spares and how much inventory do we need to stock, and in which location?

  - Can we develop a standard schedule by Asset Class, Type, Instance?

  - Can we get more predictive to avoid critical failures with the highest downtime?

An example of actionable analytics Tamr can enable is shown below. This analytic breaks down Total Loss due to Asset downtime by:

- Location – is downtime the same across locations for a particular asset class

- Suppliers – which suppliers should we work with to improve component reliability

- Probable Root Cause – what are the common causes for downtime for each asset class
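Once downtime events are unified, a breakdown like the one described above reduces to a simple aggregation. The following is a minimal sketch under that assumption. The field names (`location`, `supplier`, `root_cause`, `loss_usd`) and every value are hypothetical placeholders, not the customer’s schema or figures.

```python
from collections import defaultdict

# Hypothetical unified downtime records; every value is invented.
downtime_rows = [
    {"location": "Site A", "supplier": "Supplier X", "root_cause": "seal failure", "loss_usd": 120_000},
    {"location": "Site A", "supplier": "Supplier Y", "root_cause": "overheating", "loss_usd": 80_000},
    {"location": "Site B", "supplier": "Supplier X", "root_cause": "seal failure", "loss_usd": 150_000},
]


def total_loss_by(rows, dimension):
    """Sum downtime loss per value of one dimension, largest loss first."""
    totals = defaultdict(int)
    for row in rows:
        totals[row[dimension]] += row["loss_usd"]
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


for dimension in ("location", "supplier", "root_cause"):
    print(dimension, total_loss_by(downtime_rows, dimension))
```

The aggregation itself is trivial. The hard part, and the subject of this article, is getting events, suppliers and root causes unified so that they can be grouped at all.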

Our technology aligns well with digital transformation initiatives and, with some sample data, can be demonstrated to you in a few weeks. This radically different approach may be a great tool to include in your toolkit. If you are interested in learning more, feel free to reach out to schedule time with one of our data mastering experts.